Deliverable 4.1: Mixed method matching analysis

– Suggested methods to support the development and matching of prototypes to the different innovation regions –

| Work package | WP4: Innovation platforms for policy and business |
|---|---|
| Deliverable nature | Report (R) |
| Dissemination level (Confidentiality) | Public (PU) |
| Estimated indicated person-months | 10 person months (PM) |
| Date of delivery | Contractual: October 31, 2019; Actual: October 31, 2019 |
| Version | final |
| Total number of pages | 91 |
| Keywords | Matching framework, innovation process, prototyping, methods to support prototyping, knowledge exchange and co-creation, participation, innovation teams, innovation regions |

List of figures

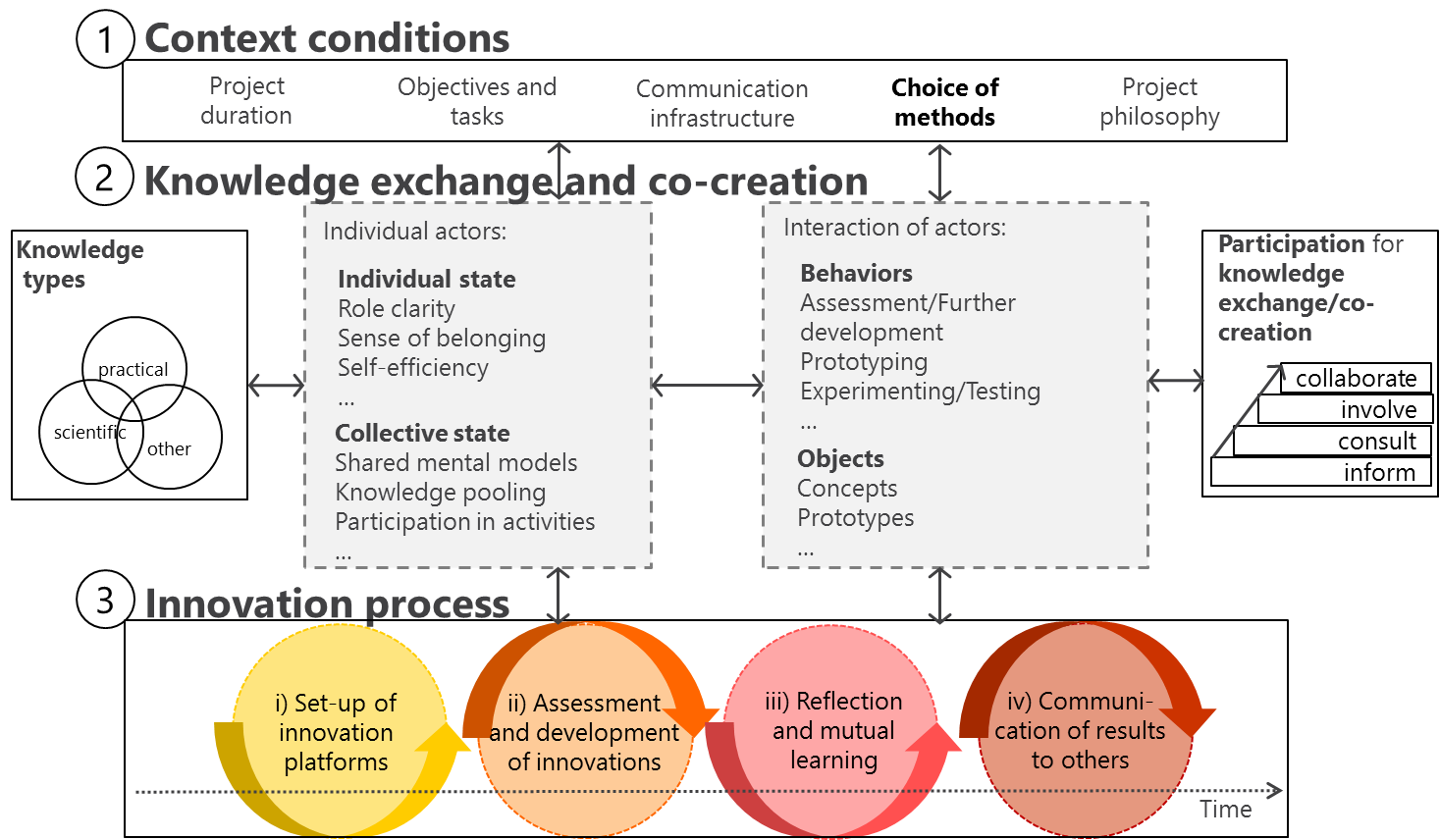

- Figure 1: InnoForESt framework for the mixed methods matching analysis (matching framework).

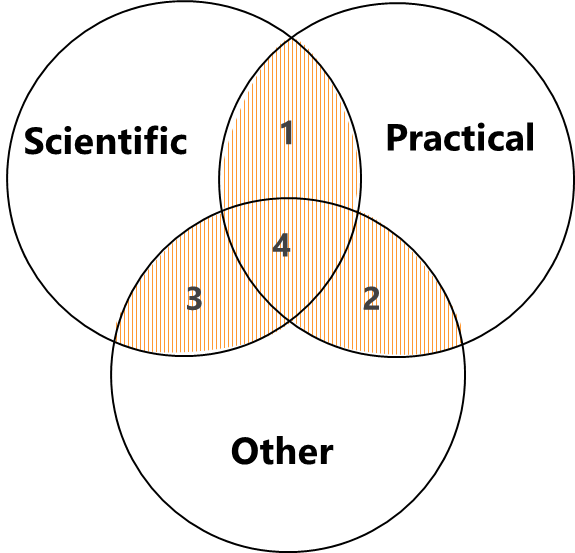

- Figure 2: Four different interfaces of knowledge exchange and co-creation.

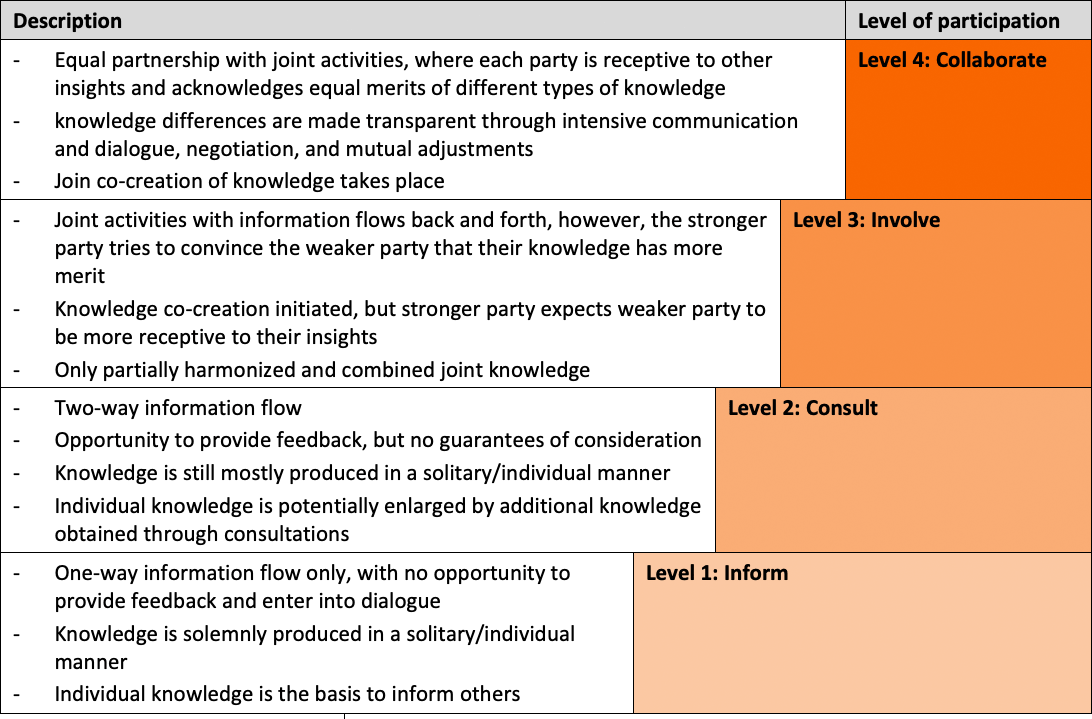

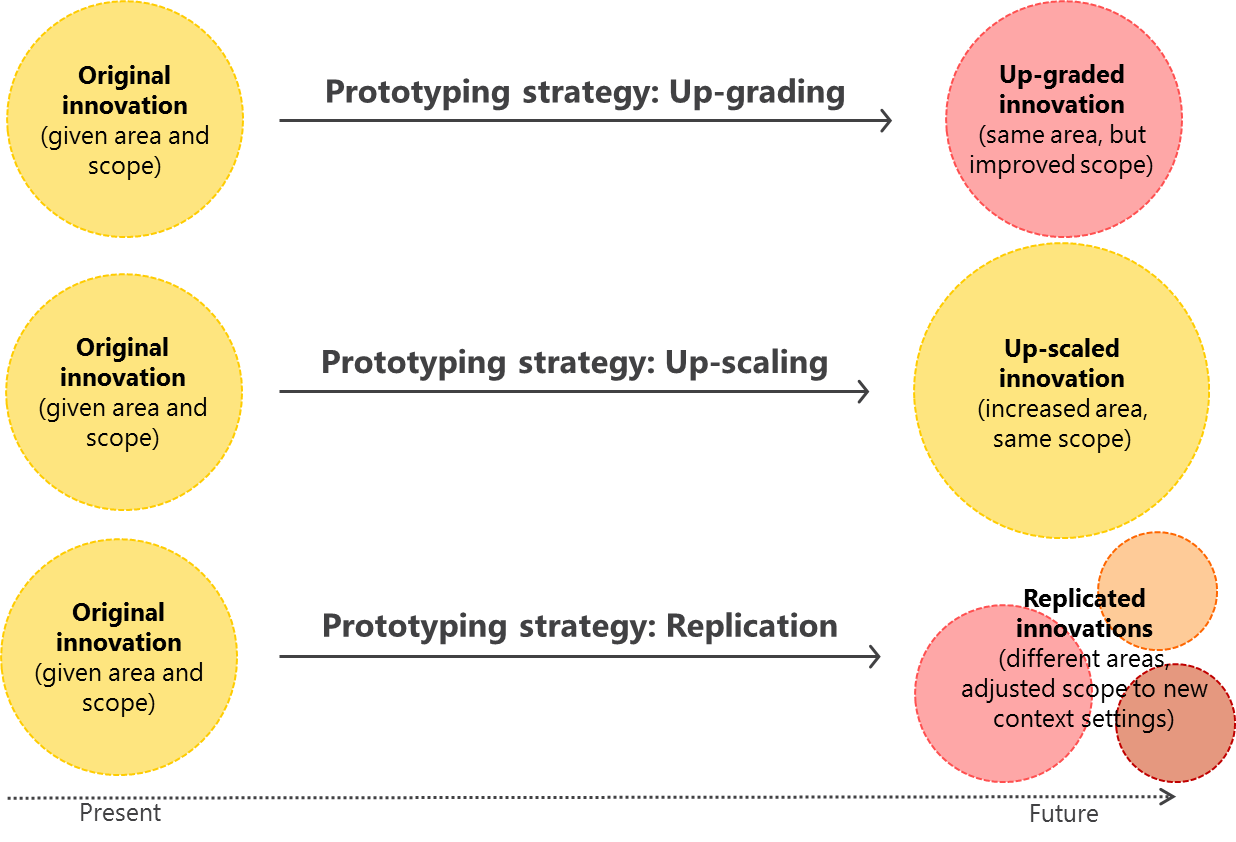

- Figure 3: Up-grading, up-scaling, and replication as three possible strategies for prototyping.

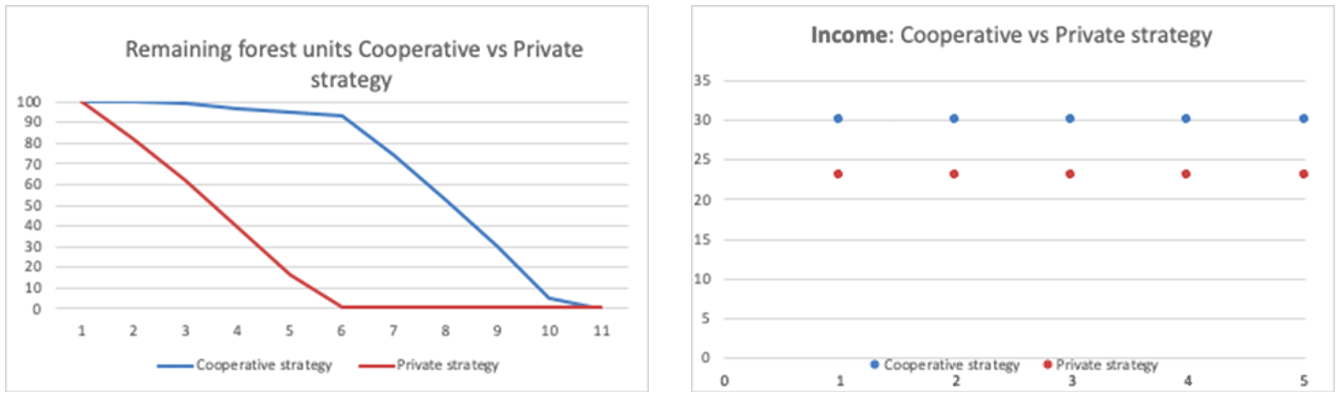

- Figure 4: Differences in private and cooperative strategies. The left-hand side shows different courses of degradation of the ecosystem services provision (from 100 resource units to 0 at the end of the game): the private strategy leads to earlier degradation, while a cooperative strategy leads to prolonged sustainable provision. The right-hand side shows that the cooperative strategy also yields higher financial benefits.

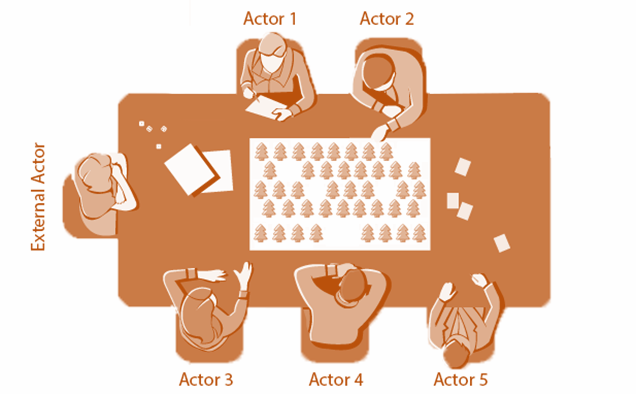

- Figure 5: Actor composition for each RBG session, with flexibility in assigning actors roles, including one actor representing an ‘external’ actor with regulatory, sanctioning, and monitoring power.

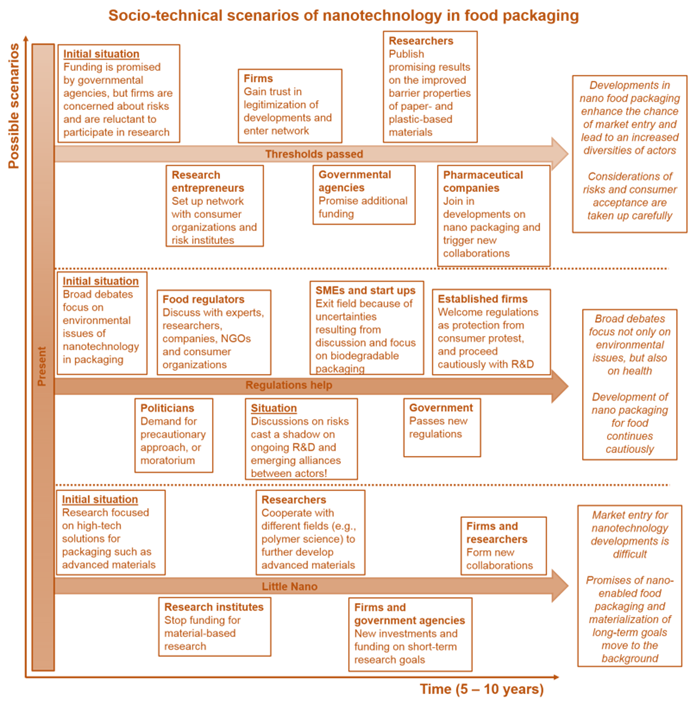

- Figure 6: CINA example 1: Research directions for a young interferometer biosensor.

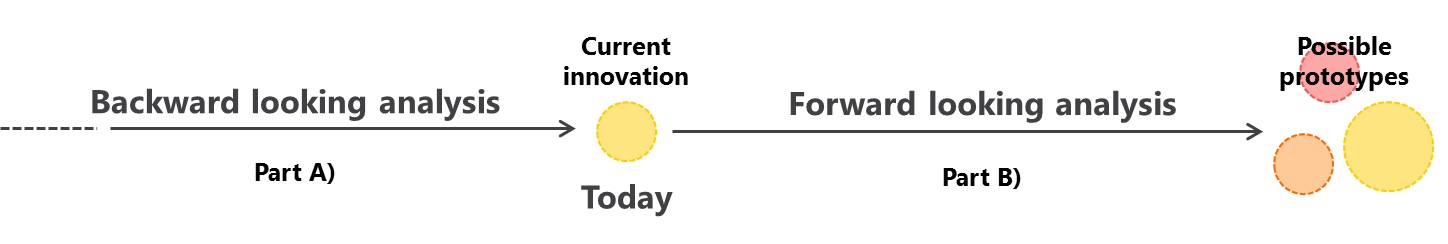

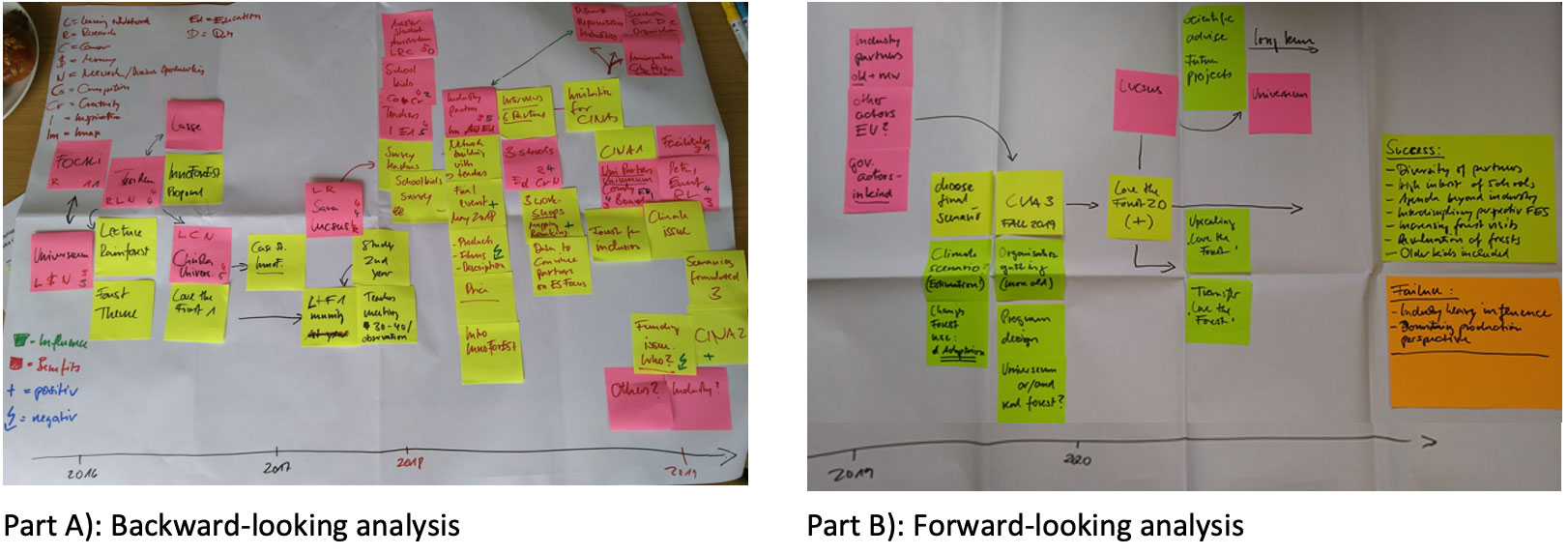

- Figure 7: Backward- (part A) and forward-looking (part B) analysis of the Process Net-Maps.

- Figure 8: Example of two Process-Net-Maps from the Swedish innovation region.

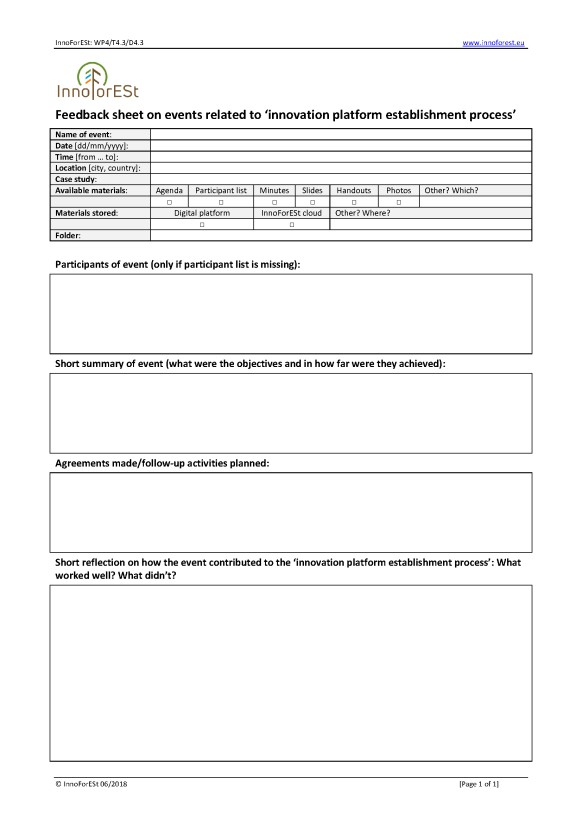

- Figure 9: Template of the feedback sheet.

- Figure 10: Map and typology of relevant stakeholders in the innovation region Austria, related to the innovation idea ‘mobile wooden houses and tourism’.

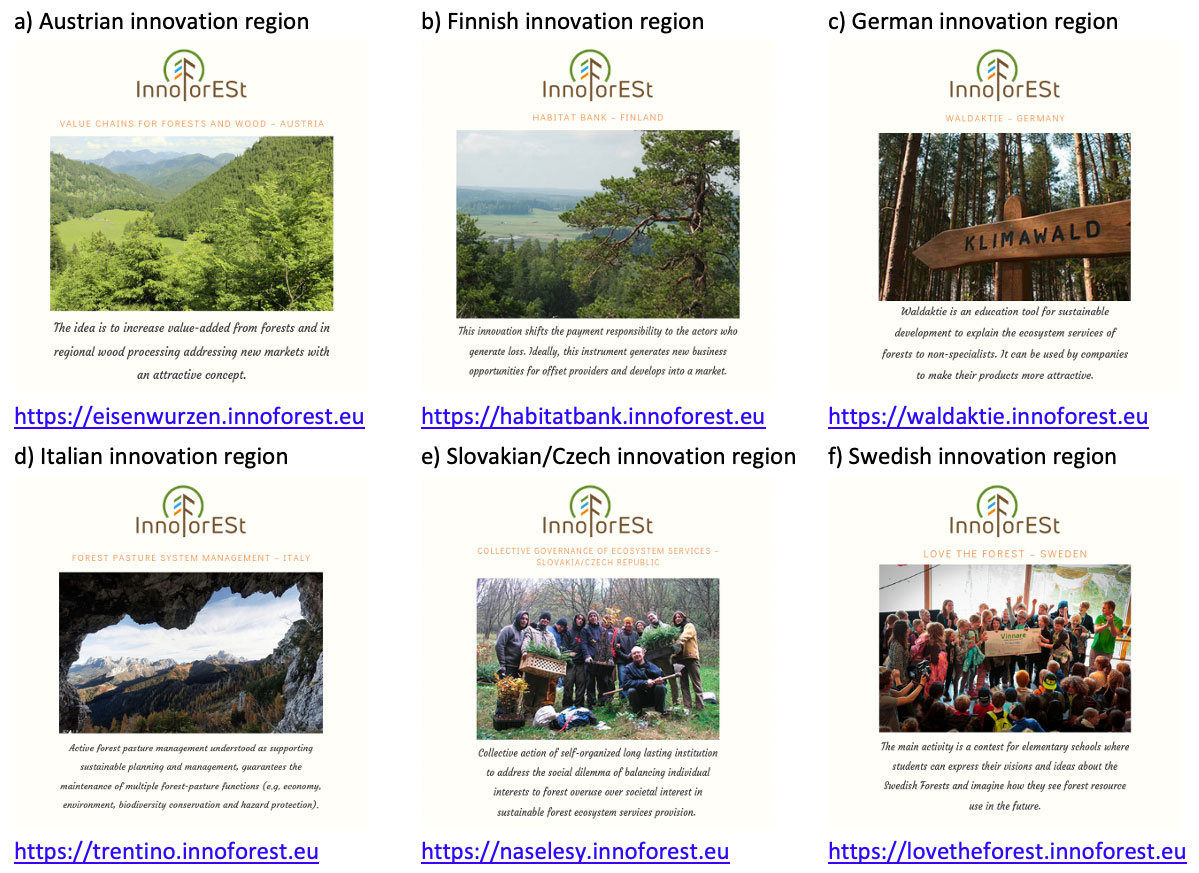

- Figure 11: The six videos produced for the InnoForESt innovation regions are all available on the InnoForESt project website.

- Figure 12: Conducting the role board game in the Finnish innovation region.

- Figure 13: Photo impressions from conducting an ‘on-the-job’ interview right in the forest.

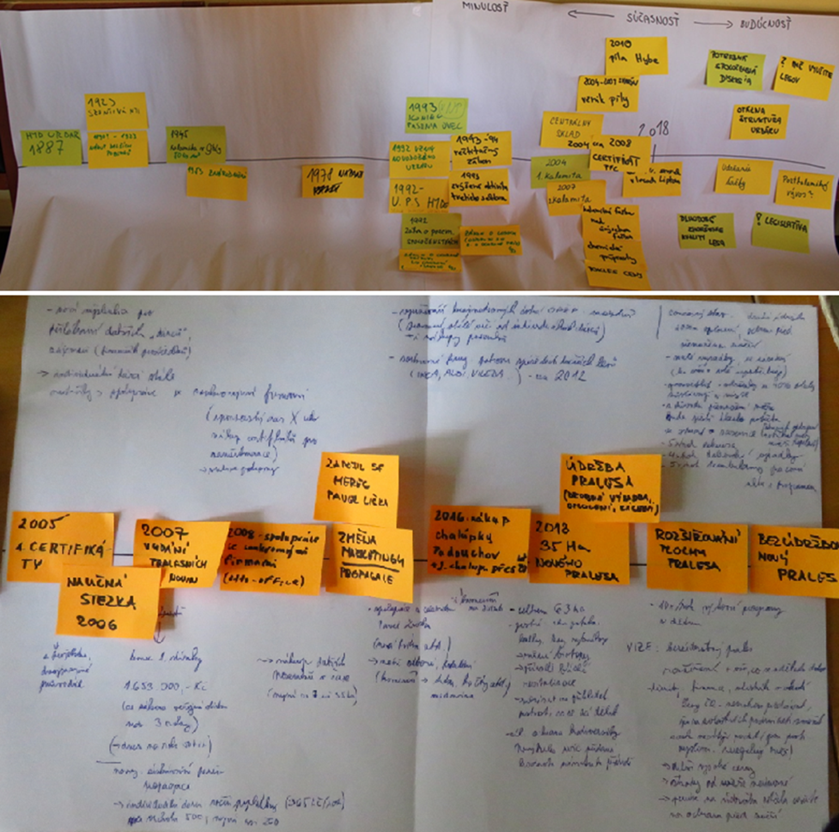

- Figure 14: Timelines of the development stages of the innovation regions (Slovak innovation region at the top, Czech innovation region at the bottom).

- Figure 15: Visual data from school 1 and school 2 conducted during a focus group in the Swedish innovation region ‘Love the Forest’.

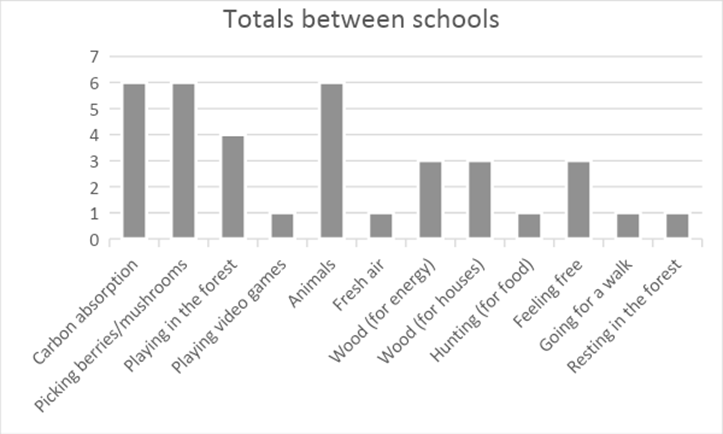

- Figure 16: Most important forest ecosystem services as selected by the students of school 1 and 2 in the Swedish innovation region ‘Love the Forest’.

- Figure 17: Photo impressions from different exercises conducted with the students during the focus groups in the Swedish innovation region ‘Love the Forest’.

List of tables

- Table 1: Description of the four levels of participation in the interaction of actors.

- Table 2: Overview of work packages’ (WP) level of support for the four phases of the innovation process.

- Table 3: Overview of which method factsheets were contributed by which work package.

- Table 4: Overview of data of five invited and three non-invited children for further QCA analysis.

- Table 5: Overview of different interview types.

- Table 6: Overview of the basic features of the methods.

- Table 7: Overview of the methods’ suitability to support actor participation, knowledge integration, and different phases of the innovation process.

- Table 8: Overview of the methods’ suitability to support different strategies for prototyping.

- Table 9: Overview of which methods were applied in which innovation region up until now (10/2019).

- Table 10: Overview of stakeholder categories currently involved in the further development of the innovation in the innovation region ‘Eisenwurzen’.

- Table 11: Results of the thematic analysis from school 1 and school 2 following a drawing activity conducted during a focus group in the Swedish innovation region ‘Love the Forest’.

List of abbreviations

| Abbreviation | Meaning |
|---|---|
| ABM | Agent-based modelling |
| CINA | Constructive innovation assessment |
| CTA | Constructive technology assessment |
| GSA | Governance situation assessment |
| InnoForESt | Smart information, governance and business Innovations for sustainable supply and payment mechanisms for ForESt Ecosystem Services |
| QCA | Qualitative comparative analysis |
| RBG | Role board games |
| SNA | Social network analysis |
| WP | Work package |

Executive summary

This document presents deliverable D4.1 of the InnoForESt project (www.innoforest.eu), the ‘mixed method matching analysis’. It outlines all methods put forward in the six thematic work packages of InnoForESt to support the knowledge exchange and co-creation process in the selected innovation regions of the project. The aim is to assess and further develop the existing innovations there and to find one or several matching ‘prototype/s’ that describe/s their possible future development pathways, whether through up-grading, up-scaling, and/or replication.

In section 1, some context information is provided on the InnoForESt project as a whole, the specific aims of work package 4 (WP4), and the particular focus of this deliverable D4.1.

In section 2, the framework for the mixed methods matching analysis (in short referred to as ‘matching framework’) is introduced. The framework considers three main aspects for the analysis: 1) the general context conditions as defined by the InnoForESt project as a whole, giving particular emphasis to its dedication to a multi-actor approach, 2) the knowledge exchange and co-creation processes as defined by the knowledge contributions of individual actors involved in the project as well as the interaction of all these actors for knowledge trading and co-production, and 3) the innovation process, presented as a phase model which distinguishes four inter-related phases.

In section 3, the methods used by each of the six thematic work packages in InnoForESt to support the innovation teams in the further development of their innovations through the three prototyping strategies of up-grading, up-scaling, and/or replication are described. Altogether 14 methods are portrayed, with details on the steps involved in their application, the types of results produced, how these results can inform prototype development and assessment in InnoForESt, the particular strengths and weaknesses of the methods, software and materials needed, as well as key references.

In section 4, the 14 methods portrayed are compared against the developed matching framework. First, the methods are compared with regard to their specific resource needs in terms of data, time, special expertise, and software. Second, they are compared with regard to how suitable they are for integrating different types of knowledge, differentiating between scientific, practical, and other (e.g. bureaucratic/administrative) knowledge. Third, they are compared with regard to their ability to provide participation options for multiple actors. Fourth, they are compared with regard to how suitable they are to support several phases of the innovation process. Finally, they are compared with regard to their ability to support different strategies for prototyping, differentiating between up-grading, up-scaling, and replication.

In section 5, reports on the experiences that the different innovation teams have made in the application of the different methods in the selected innovation regions are presented, also highlighting how the methods were adjusted to the respective context conditions, often combining different methodological approaches.

The deliverable closes with some concluding remarks in section 6.

1 Introduction

1.1 About the InnoForESt project in short

InnoForESt is an innovation action funded through the Horizon 2020 program of the European Union. It aims to explore innovative governance approaches in the European forestry sector to spur the increased provision of a wide array of different forest ecosystem services, including provisioning, regulating, cultural, and supporting ecosystem services. To foster the development of innovative governance solutions in the forestry sector for policy and business, it draws from six already existing forest governance innovations, which are of interest for further development and are to be up-graded, up-scaled, and potentially replicated. These governance innovations are located in seven European countries: Austria, Finland, Germany, Italy, Slovakia/Czech Republic, and Sweden. Across these initiatives, the project brings together a wide network of actors including science partners, such as universities and research institutes, as well as practice partners, like environmental and forestry agencies, non-governmental organizations, small and medium-sized enterprises, and forest owners and managers. Thereby, the project employs a participatory multi-actor-approach, which puts the real-life needs of the involved practice partners from the forestry and forest-related sectors at the heart of the project. The science partners support the innovation process by facilitating exchange among all involved actors for the mutual assessment and further development of the selected and already existing innovation initiatives through suitable communication infrastructures and methods. Together, science and practice partners constitute a so-called 'innovation team' in each case study region, in the following referred to as an 'innovation region'.

1.2 About work package 4 in short

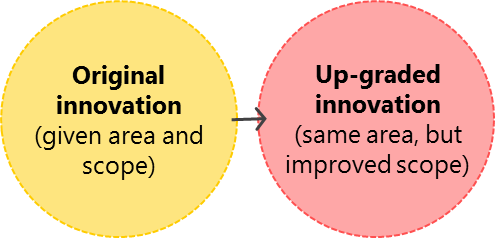

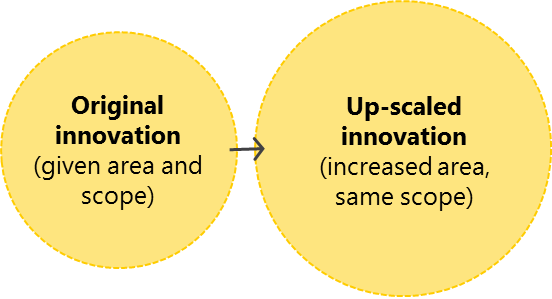

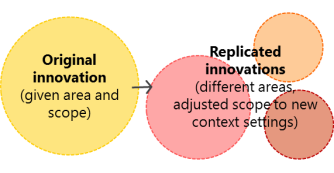

Within InnoForESt, work package 4 (WP4) is responsible for establishing so-called 'innovation platforms for policy and business' (in short: 'innovation platforms'), which constitute an essential part of the necessary communication infrastructure to support the interaction of the different actors involved in the 'innovation teams' in the different 'innovation regions'. The innovation platforms consist of a physical and a digital component through which knowledge exchange and co-creation processes are supported with the aim to assess and develop the existing innovations further through so-called 'prototyping'. Prototyping involves three different strategies called 'up-grading', 'up-scaling', and 'replication'. Here, up-grading refers to improving an existing innovation within the original area and context, but with a wider scope (e.g. by including additional forest ecosystem services and products). Up-scaling refers to transferring an existing innovation with the initial scope to a larger geographical area or higher administrative scale, still including the original region and context. Finally, replication refers to making an innovation transferable and applicable for a different region and different context, also adjusting the scope as preferred by the new network of actors.

1.3 About this deliverable in short

Against this backdrop, this deliverable D4.1 on the 'mixed method matching analysis' presents the methods put forward in the different work packages of InnoForESt to support the knowledge exchange and co-creation processes among the InnoForESt innovation teams and other project-external stakeholders involved in the innovation regions. The aim is to assess and further develop the existing innovations and to find one or several matching prototype/s that describe/s the possible future development pathways of an innovation, whether through up-grading, up-scaling, or replication.

Deliverable D4.1 is organized as follows:

First, we provide some context information on the InnoForESt project (this section).

Second, we introduce the framework for the mixed methods matching analysis (in short referred to as 'matching framework'). For the development of the matching framework, we focussed on knowledge exchange and co-creation processes in the context of innovation development as well as team building, participation and learning processes in the interaction of multiple actors within the innovation teams (see section 2).

Third, we continue with a detailed description of the methods suggested and used by each work package in InnoForESt to support the innovation teams in the different innovation regions in the further development of their innovations by matching prototypes to their innovations for up-grading, up-scaling, and/or replication (see section 3).

Fourth, we compare the methods against the developed matching framework, giving particular emphasis to how a concrete method can support the innovation teams in different phases of the innovation process, contribute to the integration of different knowledge types, and allow for the participation of multiple actors (see section 4).

Fifth, we report on the experience that the innovation teams made in the application of the different methods in the six innovation regions, also highlighting how the methods were adjusted to the respective context conditions, often combining different methodological approaches (see section 5).

We close with some concluding remarks (see section 6).

2 Framework development for the mixed methods matching analysis

For the development of the matching framework (Figure 1), we drew from several streams of research focusing on knowledge exchange and co-creation processes in the context of innovation development as well as understanding team building, participation, and learning processes in the interaction of multiple actors (e.g. Edmondson and Harvey 2018, Prager and McKee 2015, Durham et al. 2014, Banks et al. 2013, Mauser et al. 2013, Edelenbos et al. 2011, Pahl-Wostl 2009, Smith 2009).

For the framework we consider the following three main aspects for the analysis: context conditions, knowledge exchange and co-creation, and the innovation process.

2.1 Context conditions

The context conditions (aspect 1 in Figure 1) are defined by how the InnoForESt project is set up in general. This includes the overall project duration of 36 months defining the available time frame for all activities, its main objectives and tasks, its basic infrastructure provided for communication (including the innovation platforms of WP4), the methods chosen in all thematic work packages to support the innovation process and the interaction of all involved actors (which is in the focus of this deliverable and thus highlighted in bold). Very importantly, the context conditions also include the underlying philosophy and dedication of implementing the multi-actor-approach required for Horizon 2020 projects (cf. EIP-AGRI 2017) which requires that all project activities should be tailored to the real-life needs of the involved practice partners, focusing on the further development of their innovations through up-grading, up-scaling, or replication.

2.2 Knowledge exchange and co-creation

The knowledge exchange and co-creation (aspect 2 in Figure 1) takes place between all actors in the innovation regions involved through the innovation platforms. This includes the official science and practice partners of the project, but equally important, also other project-external stakeholders from the innovation regions. Overall, involved actors can include a very diverse range of actors from different sectors (e.g. forestry, agriculture, conservation, research), covering public (governmental, state), private (market, for-profit), as well as civil society (third sector, not-for-profit) actors. Each actor brings a unique set of knowledge and experience (e.g. scientific, practical, other) into the project. This knowledge and experience is shared through the interaction within the network while at the same time new knowledge is co-created, both within the innovation regions and across them. Knowledge co-creation emphasizes that knowledge is often socially constructed (e.g. Prager and McKee 2015). Based on Edelenbos et al. (2011), knowledge co-production refers to the interaction of diverse actors with the aim of 'exchanging, combining and harmonizing elements like facts, interpretations, assumptions and causal relations from their different knowledge domains' (Edelenbos et al. 2011, p. 676). Knowledge co-creation has the potential to lead to more socially relevant knowledge, improved decisions informed by this knowledge, and, ultimately, the ability to better address complex issues (e.g. Prager and McKee 2015).

Thereby, individual actors switch back and forth between two emergent states, an individual and a collective one (see left inner grey box in Figure 1), since knowledge co-creation refers to how knowledge is processed by the individual actors and how new knowledge is co-created through actors' interaction in a group setting (cf. Prager and McKee 2015).

For the first, the individual state, relevant issues are, for instance, to get a clear understanding of what one's role and responsibilities are within a single innovation team and to develop a sense of belonging to this team. This includes defining for oneself how one can best contribute to the team's goals. For the latter, the collective state, it is important to find a common language to ensure shared mental models and mutual understanding when talking about issues. This is important in order to know how individual actors' knowledge can contribute to the issues at hand, which also defines how intensive one's participation in specific activities will be. However, the composition of actors is often not stable over time, but varies along the development of the innovation, depending on what type of knowledge is needed. Thus, in Figure 1 this box is marked with a dotted line, indicating that individual actors are dropping in and out continuously. Yet, some actors stay with an innovation team constantly, belonging to the core team that drives further development.

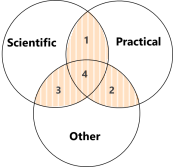

For the definition of knowledge types that individual actors can contribute to the advancement of the particular innovation, we make a difference between scientific, practical, and other knowledge (left outer box in Figure 1).

Scientific knowledge (often also referred to as expert knowledge, cf. Prager and McKee 2015, Edelenbos et al. 2011) is mostly the outcome of scientific fact-finding and research. Thus, it is mainly the science partners in InnoForESt who contribute to this type of knowledge. Practical knowledge (also referred to as lay, citizen, stakeholder, or non-scientific knowledge, cf. Prager and McKee 2015, Mauser et al. 2013, Edelenbos et al. 2011) results from real-life and on-the-ground local experiences in the innovation regions. In InnoForESt, the practical knowledge is mainly brought in by the practice partners. In addition to scientific and practical knowledge, other types of knowledge might be crucial and necessary as well, but are not available within the InnoForESt innovation teams, yet. In this case, additional project-external stakeholders have to be involved from the innovation regions or beyond. They can then contribute the missing 'other' knowledge, which might include knowledge about the appropriate political-administrative procedures and how established (legal) standards and requirements can be met at national or EU level. Based on Edelenbos et al. (2011), this type of knowledge is referred to as 'bureaucratic' knowledge (also called administrative or policy-maker knowledge, cf. Prager and McKee 2015). However, these three types of knowledge partly overlap, because a single individual can contribute several types of knowledge which makes the boundaries between knowledge types somewhat hard to delineate (cf. Prager and McKee 2015). Edelenbos et al. (2011) also mention that there is heterogeneity within single knowledge types, for instance, between the knowledge that natural and social scientists can contribute to scientific knowledge.

Consequently, we differentiate between four interfaces for knowledge exchange and co-creation in our framework (see Figure 2).

Legend:

- 1 = Interface between scientific and practical knowledge, corresponding to knowledge exchange and co-creation between InnoForESt science and practice partners

- 2 = Interface between practical and other (e.g. bureaucratic) knowledge, corresponding to knowledge exchange between InnoForESt practice partners and other project-external stakeholders in the innovation regions

- 3 = Interface between scientific and other (e.g. bureaucratic) knowledge, corresponding to knowledge exchange between InnoForESt science partners and other project-external stakeholders in the innovation regions

- 4 = Interface between scientific, practical and other (e.g. bureaucratic) knowledge, corresponding to knowledge exchange between InnoForESt science and practice partners and other project-external stakeholders in the innovation regions
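The four interfaces can also be read as a simple lookup from the combination of knowledge types involved in an exchange to an interface number. The following is a minimal sketch only; the string labels and the function name `interface_for` are hypothetical and not part of the InnoForESt framework itself.

```python
# Hypothetical labels for the three knowledge types named in the framework.
SCIENTIFIC, PRACTICAL, OTHER = "scientific", "practical", "other"

# Interface numbers follow the legend of Figure 2.
INTERFACES = {
    frozenset({SCIENTIFIC, PRACTICAL}): 1,
    frozenset({PRACTICAL, OTHER}): 2,
    frozenset({SCIENTIFIC, OTHER}): 3,
    frozenset({SCIENTIFIC, PRACTICAL, OTHER}): 4,
}


def interface_for(knowledge_types):
    """Return the interface number for the knowledge types in an exchange.

    Returns None when the combination is not one of the four interfaces,
    for example when only a single knowledge type is involved.
    """
    return INTERFACES.get(frozenset(knowledge_types))
```

For instance, a workshop that brings together science partners, practice partners, and project-external administrative stakeholders would fall under interface 4 in this reading.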

Actors' interactions (right inner grey box in Figure 1) can then be described through actors' behaviors with regard to the assessment and further development of the innovations, the prototyping for up-grading, up-scaling, or replication strategies, as well as the experimentation and testing of selected prototypes to probe whether they are applicable in practice. During their interaction, actors co-create specific objects that are helpful for their work, such as mutually understood concepts (e.g. the ecosystem services concept or the concept of social-ecological systems) that inform their interaction, or the defined prototypes for the further development of the particular innovation. These objects can also take the form of a boundary object (cf. Abson et al. 2014), i.e., something that is mutually shared by different communities (from practice, science, and policy), because it looks the same, but is referred to and interpreted differently by each group. Boundary objects, such as the ecosystem services concept, may appeal to different types of actors alike and can link them together via collaboration on a common task (cf. Star and Griesemer 1989). In Figure 1, the box 'interaction of actors', just like the box 'individual actors', is marked with a dotted line to indicate that actors might be dropping in and out of the process of knowledge sharing and co-creation, because they might not be part of the interaction continuously.

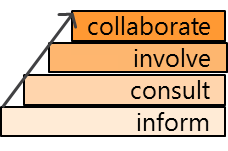

For defining different levels of actors' participation (right outer box in Figure 1) in the interaction with each other for knowledge exchange and co-creation we refer to Durham et al. (2014) and distinguish four levels: inform, consult, involve, and collaborate, where the level of participation continuously intensifies (cf. Arnstein 1969).

While 'inform' means only a one-way information flow where one actor is provided with information with no opportunity to give feedback, 'consult' equals a situation with a two-way information flow, where feedback is also possible, although without the guarantee that feedback is always taken into full consideration when proceeding further. By contrast, 'involve' means that activities are conducted together, but one party holds relatively more bargaining power in this process, thus leading the other(s). Finally, 'collaborate' denotes equal partnership at eye level where all activities are planned and implemented together by the involved actors. Since InnoForESt employs a multi-actor-approach, it has a strong focus on methods that allow for high levels of participation.
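Because the four levels form an ordered scale, they can be modelled as an ordered enumeration. The sketch below is illustrative only: the class name `Participation` and the helper functions are hypothetical, and the numeric values merely encode the ordering described above.

```python
from enum import IntEnum


class Participation(IntEnum):
    """Four participation levels after Durham et al. (2014), by intensity."""
    INFORM = 1       # one-way information flow, no feedback
    CONSULT = 2      # two-way flow; feedback possible but not guaranteed to be used
    INVOLVE = 3      # joint activities, with one party leading
    COLLABORATE = 4  # equal partnership in planning and implementation


def allows_feedback(level):
    """Feedback becomes possible from 'consult' upwards."""
    return level >= Participation.CONSULT


def is_equal_partnership(level):
    """Only 'collaborate' denotes partnership at eye level."""
    return level == Participation.COLLABORATE
```

An ordered type makes comparisons such as "at least 'involve'" explicit when methods are later screened for their participation options.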

In Table 1, more detail on the differences between the four levels of participation is provided.

The general benefits of high levels of participation (cf. Smith 2009) include:

- higher credibility of the produced results, since all participating groups can contribute their knowledge,

- higher relevance of the produced results as participants can ensure that they are more likely to be tailored to their specific needs,

- higher ownership, as all actors are able to better understand underlying assumptions and conceptual models that were used to produce the results, and

- higher perceived legitimacy of decisions made based on mutually produced results, provided that decision making was also done in a participatory and democratic manner, which finally results in higher acceptance of and higher compliance with these decisions.

However, high levels of participation also come with some costs. These include, for instance, additional time required for more in-depth involvement or higher expenses for travelling to attend meetings. We would also like to emphasize here that a high level of participation is not always needed from all actor groups and that the level of involvement is the outcome of a negotiation process taking into account actors' needs and availabilities.

2.3 Innovation process

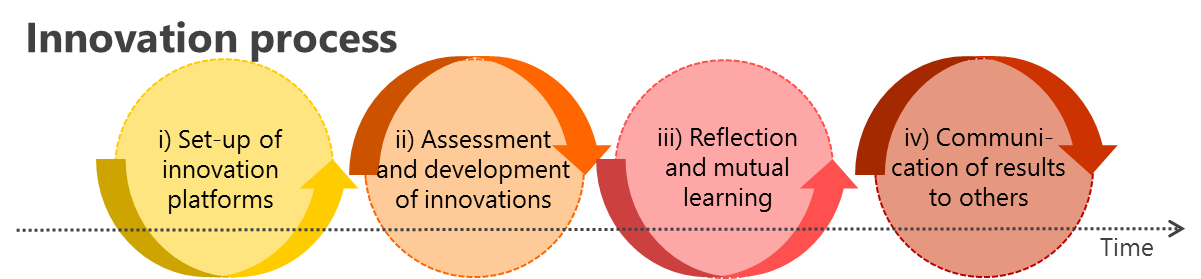

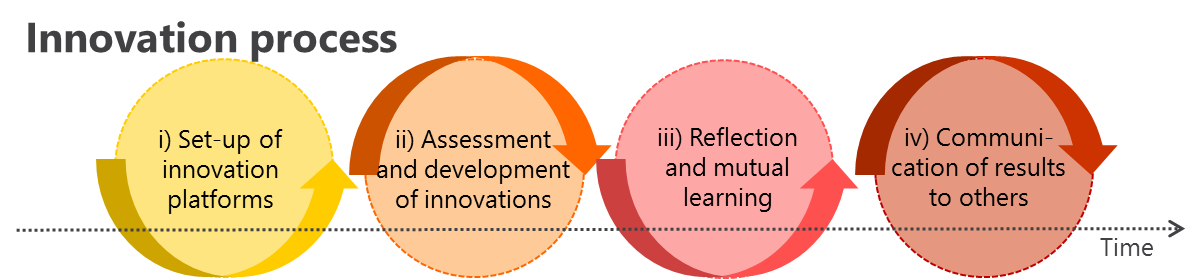

To describe the single steps in the innovation process (aspect 3 in Figure 1), for WP4, we roughly distinguish four phases:

- phase i): set-up of the innovation platform,

- phase ii): assessment and further development of the innovation (including prototyping),

- phase iii): reflection and mutual learning, and

- phase iv): communicating results to the outside world.

For each phase, the different work packages of InnoForESt are meant to support the activities of the innovation teams through specific methodological inputs (see also Table 2 below).

In phase i), to create the necessary communication infrastructure for the interaction of all team members, the innovation platforms were set up. For each innovation region, they always consist of a physical and a digital component.

The physical component of the innovation platforms consists of a diversity of physical meetings, but also office spaces, where involved actors in each innovation region can meet in person to hold smaller and bigger work meetings as well as workshops (e.g. CTA/CINA workshops, see more details provided in section 3). Participants of these events can include official members of the innovation team (both science and practice partners), but very importantly also project-external stakeholders from one/several innovation region/s or invited experts from outside these regions, including national or international experts. Physical meeting spaces are, for instance, the offices of the InnoForESt practice partners, but also additionally rented venues for accommodating workshops. Meetings can be strategically planned (e.g. CTA/CINA workshops), but also include many smaller complementary and spontaneous meetings. These are organized based on the needs resulting from the ongoing innovation process and the work routines of the practice partners. The physical component also includes basic office tools for communication (e.g. a landline, fax machine).

The digital component of the innovation platforms serves as a virtual space for knowledge exchange and co-creation and is realized as sub-menus linked to the InnoForESt website (www.innoforest.eu, please see specific sub-menus under the main menu 'Innovations in focus'). It supplements the physical component of the innovation platforms to allow also for exchange through digital means. It is further subdivided into: i) a general part which is open to the public to present the innovation in the respective local language and in English, and ii) a protected part that each innovation region can use for managing the innovation process and sharing information internally with their local network of stakeholders (mostly in the local language).

In phase ii), for the assessment and further development of the innovation in each innovation region, the innovation teams together with project-external stakeholders first investigate the strengths and limitations of the current status of their innovation and then start out to define one or several prototypes for its further development. A prototype can be understood as a vision of an innovation that describes the future development of the innovation. It can be understood as a scenario, model, sample, or early release of a product built to test a concept, process or production procedure in order to study and learn from it. Future development directions are agreed upon by the innovation teams together with other involved project-external stakeholders in the innovation regions. Thereby, a new prototype is based on the re-configuration of the defining factors of the innovation, which is assumed to improve the profile of the original innovation.

Altogether, three different strategies for prototyping of the already existing innovations can be differentiated (cf. Maier and Grossmann 2019, InnoForESt deliverable D6.2, for a more detailed description of the different strategies, including examples from the innovation regions):

- Up-grading, which refers to improving an existing innovation within the original area and context, but with a wider scope (e.g. by considering additional forest ecosystem services, improving the quality of the provided services and related products, further developing the chosen governance mechanism, or improving marketing strategies to attract further customers).

- Up-scaling, which refers to transferring an existing innovation with an initial geographical scope to a larger geographical area or higher administrative level, still including the original region and context (e.g. by raising demand for the provided forest ecosystem services and related products beyond the original area, engaging additional stakeholders with similar interests to increase the supply, including additional forest ecosystem services from another area to create a wider portfolio of offered services).

- Replication, which refers to making an innovation transferable and applicable for a different region and context, also adjusting the scope as preferred by the new network of actors (e.g. by designing communication measures to make others aware of the original innovation in order to make them think about copying and transferring it to their own region, providing guidance and advice to the new set of actors on how to adapt it to their specific context conditions in case it cannot be copied one-to-one, and replicating a funding mechanism for a new forest ecosystem service in the new area).

Figure 3 depicts the differences between the three prototyping strategies. However, hybrids of these strategies are possible.
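The distinction between the three strategies, including hybrids, can be sketched as a small classification over three yes/no questions. This is a deliberately simplified reading of the definitions above; the `Prototype` fields and the function `strategies` are hypothetical names introduced for illustration, and real prototypes will need richer descriptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prototype:
    # Assumption: each prototype can be described by three yes/no questions.
    includes_original_region: bool  # does the target area still contain the original region?
    larger_area: bool               # larger geographical area or higher administrative level?
    wider_scope: bool               # e.g. additional forest ecosystem services or products?


def strategies(p):
    """Return the set of prototyping strategies a prototype draws on."""
    result = set()
    if not p.includes_original_region:
        # A different region and context; scope may be adjusted freely.
        result.add("replication")
    else:
        if p.larger_area:
            result.add("up-scaling")
        if p.wider_scope:
            result.add("up-grading")
    return result
```

In this reading, a prototype that both enlarges the area and widens the scope returns two strategies, which corresponds to a hybrid.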

In phase iii), reflection and mutual learning is supported, both between the different innovation regions and between the different work packages. In WP4, this is encouraged through regular exchange of experiences among teams, for example, by conducting cross innovation region and cross work package Skype meetings about every three months, and also by stimulating the innovation teams to reflect on and document their positive as well as negative experiences. To do so, different templates (e.g. the feedback sheets, see section 3.8) are provided. In this way, the documented reflections can offer inspiration and learning possibilities for the other teams. Pahl-Wostl (2009) argues that learning takes place at multiple levels as well as in multiple loops and introduces a concept of triple-loop learning, which also seems useful in view of the prototyping. While the first loop of learning aims at improving something that already exists, the second loop aims at reframing, and the third loop calls for transformation. While the first loop seems mostly relevant in view of the prototyping strategy of up-grading, second- and triple-loop learning seem necessary for the prototyping strategies of up-scaling and replication.

In phase iv), results are communicated through different communication channels, including the physical and digital components of the innovation platforms. Applicable communication formats are diverse and include a wide array of written, audio, and video materials.

Altogether, we would like to emphasize that the phases do not necessarily happen in a linear way, i.e. one after the other, but usually rather in parallel, interconnected by many feedback loops, resulting in several iterative cycles (as indicated in Figure 1 by the cyclic arrows).

In all four phases, the innovation teams in the different innovation regions were supported by the activities conducted in the different work packages of InnoForESt, each work package with its own particular focus. Table 2 provides an overview of which work package supports which phases through specific methodological input. The degree of shading serves as an indication of the intensity of the support.

| Work package | Phase i) | Phase ii) | Phase iii) | Phase iv) |
|---|---|---|---|---|
| WP2: Mapping and assessing forest ecosystem services and institutional frameworks | | | | |
| WP3: Smart ecosystem services governance innovations | | | | |
| WP4: Innovation platforms for policy and business | | | | |
| WP5: Innovation process integration | | | | |
| WP6: Policy and business recommendations and dissemination | | | | |

Legend (degree of shading):

- Work package of low support in the respective phase

- Work package of moderate support in the respective phase

- Work package of high support in the respective phase

- Work package without specific support in the respective phase

3. Description of suggested methods for the matching analysis

In this section, we present a detailed description of the methods suggested and applied by each work package to support the innovation teams in the innovation regions in the further development of their innovations and matching prototypes.

For each suggested method, a factsheet is provided. All factsheets follow the same template, which contains the following headings:

- Name of method

- Author/s of method factsheet

- Short description of method

- Steps involved

- Results produced

- How results can inform prototype development and assessment in InnoForESt

- Strengths and weaknesses of the method

- Software and materials needed

- Key references

- Contact info of InnoForESt experts who can be contacted to obtain further information

The inputs for the method descriptions were provided by the individual work package teams of InnoForESt and the respective authors are specified for each sub-section.

The teams were free either to describe the method in more general terms, also pointing out alternative ways of applying it, or to focus the description on how the method was specifically applied in InnoForESt.

Table 3 provides an overview of which methods were contributed by which work package(s).

| Work package | Contributed method factsheet(s) |
|---|---|
| WP2: Mapping and assessing forest ecosystem services and institutional frameworks | |
| WP3: Smart ecosystem services governance innovations | |
| WP4: Innovation platforms for policy and business | |
| WP5: Innovation process integration | |
| WP6: Policy and business recommendations and dissemination | |

3.1 Institutional and bio-physical mapping

Author/s of method factsheet:

Liisa Varumo and Eeva Primmer

Short description of method:

In response to the task of mapping and assessing forest ecosystem services and institutional frameworks (WP2), we decided to analyze policy strategies, laws, and other policy documents at regional, national, and EU level. For the countries of the innovation regions and at EU level, at least three documents were analyzed, including the forest strategies and laws as well as the biodiversity and bio-economy strategies. For additional European countries, only the forest strategy or its equivalent was taken into account. Based on the factors/variables to be analyzed in the documents, we constructed a questionnaire. The persons filling in the questionnaire were asked not to draw on their own personal knowledge, but to rely strictly on the information presented in the policy documents. All information from the questionnaire was then fed into a database. In preparing the questionnaire, all factors/variables (e.g. which types of forest ecosystem services, governance actors, and governance and forest management systems to consider) were developed together with all WPs to ensure that they would be as coherent as possible across WPs. The questionnaire also included some background data on the documents. It consisted mainly of multiple-choice questions producing quantitative data comparable across regions, complemented by open questions for more detailed qualitative answers. For each analyzed policy document, the questionnaire was to be filled in 10 times, once for each of the 10 forest ecosystem services taken into account. As an output, a comprehensive database in Excel format was created, which could prospectively be used to create spatial maps that could also be overlaid with the additional spatial information from the biophysical mapping.

Steps involved:

- As a first step, we conducted a quick scan of academic and grey literature on existing comparisons of forest management systems and of policy documents relevant to forests and forest ecosystem services, in order to organize the development of the database and determine the appropriate data sources (e.g. Bouwma et al. 2018). This initial scan gave us a basic idea of how the analysis could be conducted and what types of documents could serve as its basis.

- In a second step, the method for data collection was developed with a very strong focus on the envisioned final output, a database, and its technical usability for InnoForESt. Initially, we considered having people fill in an Excel sheet with the factors directly, but the questionnaire was seen as a more user-friendly approach to collecting the data. We also considered whether sources other than policy documents could be used, i.e. personal knowledge, knowledge already contained in academic literature, etc., but eventually decided that focusing the analysis on policy documents would generate the most comparable data across the innovation regions.

- In a third step, the method for retrieving the data entries from the policy documents was decided upon. Here, the person conducting the document analysis was instructed to first read each document in one go to get a general idea of its core contents. Then, the person was to re-read the document and, while re-reading it, retrieve all the relevant information for one of the 10 forest ecosystem services while simultaneously completing the questionnaire. Each person was free to decide whether to first complete the analysis in a Word document containing the questionnaire and then enter the responses into the online questionnaire, or to fill in the online questionnaire directly. A manual was created with detailed instructions for the step-by-step document analysis.

- In a last step, all information from the questionnaire was entered into the database from where we extracted all information necessary for the final analysis of comparing the analyzed regions (see below).

Results produced (examples):

As mentioned above, the final outcome is a database in the format of an Excel sheet which contains mainly quantitative information. This information was further analyzed in SPSS to create cross tabulations of the different factors/variables and to see which of them are correlated (or not). The results were then used for a preliminary and rather speculative analysis of how the different analyzed policy documents might aid or guide the future emergence or development of innovations and different governance mechanisms.
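
The cross-tabulation idea can be illustrated with a minimal Python sketch. It is not the SPSS analysis that was actually carried out; the record fields (`ecosystem_service`, `actor`) and all sample values are hypothetical placeholders chosen only to show how co-occurrences of two coded variables can be counted.

```python
from collections import Counter

# Hypothetical questionnaire records: one record per policy document and
# forest ecosystem service. Field names and values are illustrative only.
records = [
    {"document": "Strategy A", "ecosystem_service": "timber", "actor": "state"},
    {"document": "Strategy A", "ecosystem_service": "recreation", "actor": "municipality"},
    {"document": "Strategy B", "ecosystem_service": "timber", "actor": "state"},
    {"document": "Strategy B", "ecosystem_service": "timber", "actor": "private owner"},
]


def cross_tabulate(rows, row_var, col_var):
    """Count how often each combination of two categorical variables occurs."""
    return Counter((row[row_var], row[col_var]) for row in rows)


table = cross_tabulate(records, "ecosystem_service", "actor")
for (service, actor), count in sorted(table.items()):
    print(f"{service:12s} {actor:15s} {count}")
```

Such a table only shows co-occurrence counts; whether two variables are statistically associated would still have to be tested separately.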

How results can inform prototype development and assessment in InnoForESt:

Once the database is usable online, the InnoForESt team members should be able to search for different factor combinations to see what types of innovations exist in the presence of which factors or combinations thereof, also in regard to the biophysical conditions. For example, one could search for a certain actor, certain forest ecosystem service(s), or biophysical condition and then see what types of innovations (if any) are present in the database or vice versa, or one can search for the innovation and see what factors are present in its respective region. To enable such a search mechanism, the platform, user interface, and the overlaying of the two mappings are still under development.
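
Since the search platform is still under development, the following Python sketch is only an assumption of how such a factor search might work. The entries, the field names (`region`, `actor`, `ecosystem_service`, `innovation`), and the helper `find_entries` are hypothetical and do not reflect the actual database structure.

```python
# Hypothetical database entries; field names and values are placeholders,
# not taken from the InnoForESt database.
entries = [
    {"region": "Region A", "actor": "forest owner",
     "ecosystem_service": "recreation", "innovation": "Innovation 1"},
    {"region": "Region B", "actor": "municipality",
     "ecosystem_service": "recreation", "innovation": "Innovation 2"},
    {"region": "Region B", "actor": "forest owner",
     "ecosystem_service": "timber", "innovation": None},
]


def find_entries(rows, **criteria):
    """Return all rows whose fields equal every given criterion."""
    return [row for row in rows
            if all(row.get(field) == value for field, value in criteria.items())]


# Search by factors: which innovations (if any) are recorded where
# forest owners and recreation occur together?
matches = find_entries(entries, actor="forest owner", ecosystem_service="recreation")
innovations = [row["innovation"] for row in matches if row["innovation"]]

# Search the other way round: which factors are recorded for a given innovation?
factors = find_entries(entries, innovation="Innovation 2")
```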

Strengths and weaknesses of the method:

Strengths:

- Based on the manual, further iterations of the questionnaire to analyze additional policy documents are straightforward and rather self-explanatory

- The structure of the database also easily allows for the integration of additional factors/variables to be considered for the analysis

- Altogether, a low level of expertise is required to do the analysis by following the instructions laid out in the manual

- The method allows for making timelines showing how past strategies have addressed different forest ecosystem services and factors related to them, and thus for constructing 'pathways' or trends. However, not all countries have previous versions of the documents, for example, if the sector is quite new. This restricts the options for this type of analysis.

Weaknesses:

- A challenge of the method is calibrating the responses when several people are doing the document analysis. Even if you instruct people to only respond to the questionnaire based on the content of the document, previous knowledge will likely influence the interpretation.

- In questions where you had to give a weight/importance to, for example, an addressed forest ecosystem service, the question 'compared to what?' easily came to mind: a higher weight compared to other mentioned forest ecosystem services in the document or compared to other strategies, countries, etc.?

Software and materials needed:

A manual was produced while the database questionnaire was developed. The manual includes clarifications of key terms (such as innovation), examples for most questions and responses, and guidance on how to evaluate/give weight to the factors/variables on ordinal scales. For the first round of analysis, three online training sessions were organized for the people doing the analysis. In these sessions, we also aimed to improve calibration by having everyone analyze certain forest ecosystem services from the EU Forest Strategy and then discussing whether we had given similar responses in the questionnaire.

The software used for the questionnaire was an online survey tool called Webropol (https://webropol.com), which requires a license. The strength of the software is that it allows for very diverse question types and that the outputs can be filtered and extracted in diverse formats such as *.csv, *.xls, *.doc, or *.pdf. For the analysis of the quantitative outputs, the software SPSS was used. The qualitative outputs created by the open questions were extracted, coded, and analyzed in Microsoft Excel.

Key references:

Contact info:

- Eeva Primmer: eeva.primmer@ymparisto.fi

- Liisa Varumo: liisa.varumo@ymparisto.fi

- All contributors of D2.1 (cf. Primmer et al. 2018), who participated in the document analysis for the institutional and bio-physical mapping for the individual innovation regions.

3.2 Role board games

Author/s of method factsheet:

Martin Spacek, Veronika Gezik, Tatiana Kluvankova and Viera Bastakova

Short description of method:

It is extremely difficult to predict human behavior because human decision-making is affected by a wide range of aspects and preferences. One of the tools that can be used to reduce the uncertainty involved in designing new governance measures and mechanisms is the application of experiments and games in both laboratory and field conditions. With their application, it becomes possible to confirm or reject predictions of a certain behavior. In the case of role board games, the benefits extend to supporting education and raising awareness of the studied issues. In the social sciences, experiments are usually used to analyze and predict individual and group behavior in a controlled situation and to test novel instruments and decision tools in real-world situations.

Experiments enable testing, i.e. verifying or falsifying a hypothesis based on potential causal relations in a particular situation. They allow for studying behavioral reactions to changes in variables. A laboratory experiment is repeatable under the same, well-defined and measurable conditions, as the procedure of the experiment is described accurately and in detail. A field experiment, on the other hand, takes place in the natural environment of the participants, which is familiar to them and where they feel more at ease. Experiments usually provide 'yes' or 'no' answers. Against this backdrop, role board games (RBG) can help to overcome some limits of experiments: they make it possible to study wider consequences and provide at least a partial answer to the question of 'why'. RBGs can also be repeated under controlled conditions, but increase the validity of experiments in real-life situations.

Steps involved:

The application of the method is divided into two main steps: i) designing and adapting the game to a specific context, and ii) its implementation in a given context.

For the first step, i) designing and adapting the game to a specific context, the RBG can be adapted from an already existing experimental design (following the approach outlined by Castillo et al. 2013). To this end, the logic of the experiment is discussed with the partners in the field and adapted based on their knowledge. The adaptation focuses mainly on the context, the composition of the key stakeholder roles, and the specific innovation factors and approaches in each group. Despite the adaptation, the research team has to keep the basic parameters of the game unchanged to ensure the comparability of the results and of its replications. The whole process of adaptation takes approximately three months. Before the game is actually applied, a pilot test of the game design is needed.

The second step, ii) the implementation in a given context, requires the involvement of at least one moderator to guide the game and one assistant (ideally two of them) for calculation of the game results and collection of the answer sheets or documentation of the narratives. This step involves the following sub-steps:

- Preparation for the game – preparation of the board with resource units, players' cards, decision sheets, etc. (30 minutes)

- Explanation of the rules (15-20 minutes).

- Game playing (60 minutes).

- Short survey on decision reasoning and calculation of the group results (5 minutes).

- Focus group discussion: game results and comparison (25 minutes).

- Payment of earnings to stakeholders (5 minutes).
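
As a quick check of the overall time needed for one session, the durations of the sub-steps listed above can be added up. The short Python sketch below uses only the minutes stated in the list; the variable names are illustrative.

```python
# Durations in minutes (minimum, maximum) as stated in the sub-steps above.
sub_steps = [
    ("Preparation for the game", 30, 30),
    ("Explanation of the rules", 15, 20),
    ("Game playing", 60, 60),
    ("Short survey and calculation of the group results", 5, 5),
    ("Focus group discussion", 25, 25),
    ("Payment of earnings to stakeholders", 5, 5),
]

total_min = sum(shortest for _, shortest, _ in sub_steps)
total_max = sum(longest for _, _, longest in sub_steps)
print(f"Total session time: {total_min}-{total_max} minutes")
```

A single session therefore takes roughly two hours and twenty minutes, not counting breaks or the arrival of participants.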

It is recommended that an assistant and a moderator take notes during the game, especially about the mood of the participants or their interest in the game, potential disturbances (outside noise, weather, etc.), interpersonal relations among actors (e.g. whether some of them are friends or colleagues), and the activities of individual players.

Results produced (examples):

The method provides both quantitative and qualitative results. Qualitative results come from the analysis of the discussions and interactions of the players during the game as well as from the subsequent focus group, in which the results of the game are discussed, looking at different game stages, interactions among players, and differences from reality. Quantitative results compare the cooperative and the private (Nash equilibrium) strategy with the actual strategy of the group of players during the game. In the forest context, the results display the harvesting strategy, the income of the group, or the remaining resource units throughout the whole game. When the number of participants is high enough, statistical analysis can also be applied to compare the effects of different factors and/or the results between different groups of actors.
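
The kind of comparison between a cooperative and a private strategy can be illustrated with a toy common-pool resource model in Python. This is a sketch under strong assumptions and not the actual game design: the number of rounds, the regrowth rule, the carrying capacity, and the harvest levels per player are hypothetical, and the 'private' harvest level is simply a high value rather than a derived Nash equilibrium.

```python
def play(harvest_per_player, players=5, capacity=100,
         regrowth_percent=25, rounds=10):
    """Simulate a shared resource; return (stock after each round, total harvest).

    All parameter values are hypothetical and chosen for illustration only.
    """
    stock = capacity
    stock_history = []
    total_harvest = 0
    for _ in range(rounds):
        # Players cannot harvest more than what is left.
        harvest = min(stock, harvest_per_player * players)
        stock -= harvest
        total_harvest += harvest
        # The remaining stock regrows, but never beyond the capacity.
        stock = min(capacity, stock + stock * regrowth_percent // 100)
        stock_history.append(stock)
    return stock_history, total_harvest


# Cooperative strategy: each player harvests a moderate amount.
coop_stock, coop_harvest = play(harvest_per_player=4)
# Private strategy: each player harvests as much as the (assumed) maximum.
private_stock, private_harvest = play(harvest_per_player=10)

print("cooperative:", coop_stock, coop_harvest)
print("private:    ", private_stock, private_harvest)
```

With these assumed parameters, the cooperative group keeps the resource at its initial level and harvests more in total, whereas the private strategy depletes the resource within three rounds. The actual strategy observed in a session can be compared against two such reference paths.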

How results can inform prototype development and assessment in InnoForESt:

In InnoForESt, the RBGs are meant to test the re-combination of innovation factors in a real-world setting and its effect on actors' behavior. They are part of the prototype development for the different existing innovations. They enable stakeholders from the innovation regions to test different prototyping strategies and learn about their potential effects, to discuss necessary adaptations to the respective context conditions, and to increase collaborative capacity and trust.

In order to get a better understanding of the impact of key innovation factors for the innovation regions, we designed a behavioral [lab] experiment in the form of an RBG that is specifically tailored to InnoForESt.

The main question addressed by this RBG is:

- How to create conditions to enable innovations for sustainable use and well-being in innovation regions under the diverging interests of forest ecosystem services users?

We plan to test combinations of key innovation factors for innovation prototype development as preferred future prototypes for sustainable forest ecosystem services provision in the innovation regions. In doing so, we consider different policy interventions (such as strict regulation vs. various types of payments for ecosystem services schemes, and business incentives) and external risk factors (such as climate events, depopulation, migration, and market risks). The proposed design for the RBG builds on Cardenas et al. (2013) and Castillo et al. (2011) as an interactive agent-based model arranging for repeated interaction and learning in real-world situations. It contributes to testing the effectiveness of different interventions for the sustainable provision of forest ecosystem services and the acceptance of such interventions by forest ecosystem services users.

The RBG sessions create a situation in which a group of five forest ecosystem services users representing different actor groups (see Figure 5) makes mutual decisions about the use and management of a forest ecosystem and the related forest ecosystem services provision. In the game, one of the actors, for instance, will act as an 'external actor', i.e. an authority (e.g. national park, regional office, government, or bank) external to forest use but with regulatory, sanctioning, and monitoring power. The roles of the other actors can be adjusted to the needs of the specific innovation region.

During each session, the players are then confronted with fostering or hindering context conditions (e.g. in regard to the local climate, economy, governance, rules restrictions, and innovation potential) as well as different actors' values and interests. The actors will face a change in the conditions/factors (individual/collective action, diversity of rules, innovation factors, external events and disturbances, etc.) and will be able to observe/test what conditions lead to a more successful collaboration for sustainable forest ecosystem services provision in the specific context in the innovation regions. This approach will create a space to test innovation activities for prototype development (reflecting the three prototyping strategies up-grading, up-scaling, or replication).

Strengths and weaknesses of the method:

Strengths:

The method can be used as a learning tool for the involved players which allows for studying the effects of particular interventions on the decision making and behavior of the different actors represented. It provides the researcher with a high level of control over different variables involved and provides specific results and conclusions. The experimental design also allows the replicability of the method.

Weaknesses:

Only a limited number of the potential factors that could be changed can be studied in each session. Also, the method can sometimes produce unrealistic results due to human errors or to artificial situations (which are not present in a real-life environment). Furthermore, the design of the game requires expertise in this particular field of research, and the implementation of RBGs is time consuming. Experimental research itself does not provide an explanation of the results. A further limitation of the experiment is its lack of external validity, which results from the simplicity of the design, its abstract nature, the limited number of participants and the knowledge types they represent, and the short time period in which decisions have to be made.

Software and materials needed:

- Playing board with gaming material

- Tool for calculation of probability (e.g. different types of dice)

- Software for calculation and display of the results (this can be done in MS Excel with predefined functions that calculate the results automatically). Alternatively, it can also be done on paper only.

Key references:

Contact info:

- Tatiana Kluvankova: tana@cetip.sk

- Martin Spacek: admin@cetip.sk

- Veronika Gezik: veronikagezik@gmail.com

3.3 Constructive innovation assessment

Author/s of method factsheet:

Peter Stegmaier, Ewert Aukes and Christian Schleyer

Short description of method:

The method constructive innovation assessment (CINA) is based on a methodology previously developed for assessing newly emerging technologies called constructive technology assessment (CTA). CINA/CTA is inherently adjustable to different contexts of use (please see also https://cta-toolbox.nl). CINA provides a workshop-based learning forum for all participants. Though research is needed for preparing CINA workshops thoroughly and also to analyze the results, it is not only research. Rather, the main goal is to bring a variety of stakeholders together and facilitate their exploration of innovations for the governance of forest ecosystem services they find relevant.

In the case of InnoForESt, CINA is mainly about developing alternative scenarios for different governance innovations by getting all concerned actors together at an early enough stage of a development (i.e. when modifications are still possible). In doing so, concerned actors can early on 'insert' considerations into the developmental process that 'improve' what is emerging: in other words, to modulate ongoing developments through 'constructive dialogues'. Therefore, it is crucial to include all relevant actors in a given field. Relevant actors are not only all the 'usual suspects', but also those others who are not yet there, but of which an innovation team thinks they could indeed provide interesting or even decisive new impulses in terms of knowledge, information, or human and other resources. The ultimate aim is to offer the participants new, additional insights from and networking opportunities with people they would normally not talk to. Rather than feeling like research subjects answering research requests, participants should, first and foremost, experience additional value for their own purposes. In order to guarantee this, the set-up of the workshops needs to be absolutely clear about stakeholders' views and highly sensitive to their interests (please see also method description on stakeholder analysis).

Steps involved:

- Scenario development and prototyping: done by the innovation regions themselves, realistic enough and thought provoking at the same time; leaving options for assessing different paths that the prototype development and side-conditions could take.

- Case descriptions: broad enough, but also with detail; sound research about the structure of the situation into which the forest ecosystem services innovation shall be introduced or in which they could further be pursued in terms of the de-facto conditions, pre-history, and expected developments that actually characterize the case constellations as of now.

- Actor maps and typologies: including a list of actors that are (a) able to represent a specific stakeholder view authoritatively (expertise, specialization), and (b) able to express their views very well (skilled and interested in communicative exchange), while (c) also being capable of out-of-the-box thinking (creativity, openness). The stakeholder analysis in WP5 is crucial here.

- Prototype assessment: crucial point of reference for the CINA workshops: either in terms of scenarios for choosing prototypes most promising for further development or for assessing scenarios of conditions under which specific prototypes could be proven more or less viable.

- Training innovation teams: achieve a basic understanding of the method and comparability of workshop results; there will be reports from all CINA workshops, the individual case workshops will be compared, and the method itself will be assessed.

- Carry out CINA workshop: decision on when in the process to use CINA workshops and for what purpose.

Results produced (examples):

In cases where CINA has already been used, the following results have been produced, for instance (when transferring it to InnoForESt, just replace the technical application with 'forest ecosystem governance innovation' in the given examples below):

Example 1: Socio-technical scenarios of nano-technology in food packaging

One example of a set of socio-technical scenarios has been described by Rip and te Kulve (2007), who propose multiple futures for nanotechnology in the food packaging domain. The elaborate and complex scenarios are visualized in Figure 6 below in a simplified form.

How results can inform prototype development and assessment in InnoForESt:

The CINA workshop format is implemented as structured interactions focusing on forest ecosystem services innovation 'scenarios'. These are realistic narratives that invite all kinds of relevant stakeholders to probe the conditions under which specific prototypical arrangements could be successful or could lead to less desired consequences (cf. https://cta-toolbox.nl/tools/scenarios/#aim). The CINA workshops will either lead to a choice of scenarios for further developing specific prototypes or, once concrete prototypes have been chosen, to an assessment of scenarios of conditions under which the prototypes might become (more) viable.

Strengths & weaknesses of the method:

Strengths:

- Reveals underlying argumentations and potential conflicts

- Lays basis for constructive deliberative intervention

- Provides avenues for, possibly modulated, continuation of the innovation

- Can be done on the basis of existing knowledge about the situation, also through consulting case experts, although most often some additional digging and collecting of competing viewpoints is advised (scenario development)

- Requires intensive familiarization with the innovation context

Weaknesses:

- Requires basic experience in social science or policy analysis

- Is tailor-made for specific innovation context; i.e. not simply generalizable to other contexts

- Requires a sufficient level of time investment for scenario development and training to be successful and useful

Software/Materials needed:

With respect to the required entourage, CINA workshops differ only slightly from other kinds of workshops. A physical location is preferred over a virtual one. A moderator is needed who is familiar with the CINA approach and different kinds of moderation techniques. Additional material may involve documentation techniques. These may include audio- and/or video-recording, written minutes, but also visual aids such as brown paper, post-it, stickers, and pens.

Key references:

Contact info:

- Peter Stegmaier: p.stegmaier@utwente.nl

- Ewert Aukes: e.j.aukes@utwente.nl

- Christian Schleyer: christian.schleyer@uibk.ac.at

3.4 Governance situation assessment

Author/s of method factsheet:

Peter Stegmaier, Ewert Aukes and Christian Schleyer

Short description of method:

The governance situation assessment (GSA) is a descriptive tool to chart configurations of actors, institutional arrangements, policy instruments, policies, and politics in place in a certain innovation context. The GSA is not a scientific data analysis method per se, but provides a way of gaining a general overview of the real-life, on-the-ground development of an innovation (Colebatch 2009). The GSA zooms in on the political order of an innovation, how it emerges, stabilizes, is maintained, or even de-aligned. It generates the necessary understanding of the innovation's political, institutional, and physical order for a) nurturing a stakeholder network as well as b) setting up and carrying out workshops aiming at articulating and probing viable alternatives for innovating the governance of a forest ecosystem service.

In the course of the project, it has become more and more apparent that some socio-political situations are simply not yet ready for an actual innovation to be developed. It is very much possible that a process of considerable groundwork has to precede the work on actual innovations. This groundwork can include the exploration of decision-making traditions and cultures in practice, the physical bringing together of stakeholders, as well as the breaking up of entrenched structures. Working together constructively in workshops following the Constructive Innovation Assessment approach is not a walk in the park and cannot be taken for granted. Circumstances allowing for a free flow of ideas and an open discussion of possible futures sometimes need to be established in a tedious process of suggesting out-of-the-ordinary possibilities and stimulating out-of-the-box, creative thinking. Revealing such entrenched structures and the necessity to create constructive circumstances can be achieved by preparatory work through the GSA.

By means of a list of questions to be answered, the societal context of a (planned) innovation is mapped. These questions deal with a) actors, included in and excluded from current innovation processes, and what political interests they pursue, b) how, from a governance perspective, these actors relate to each other in the policy realm, c) what the history of the innovation is, d) what the current state of the innovation is, and e) which future developments are currently expected for the innovation.

Additionally, a specific aspect of the GSA is assessing which key problems actors perceive (cf. Hoppe 2010). This aims at the big picture. If one intends to develop the innovation in question in a socially accepted way with broader societal support, knowing about existing problem perspectives is useful knowledge, too. Roughly, problems may arise due to two disagreements. Either actors have not reached consensus about the norms and values relating to the innovation yet, or they may disagree about the knowledge. These two dimensions of problems lead to three types of problems. First, 'unstructured problems' describe situations in which actors neither agree on the norms and values nor on the necessary and available knowledge. Second, if there are 'moderately structured problems', actors have reached consensus either about the norms and values involved or about the required and available knowledge. Third, if consensus has been reached about the norms and values as well as the required and available knowledge, one can speak of a 'structured problem'.
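
The typology described above can be written down as a small decision rule. The following Python sketch merely restates the three problem types from the text; the function name `classify_problem` and its parameters are illustrative and not part of the GSA question list.

```python
def classify_problem(consensus_on_values: bool, consensus_on_knowledge: bool) -> str:
    """Map the two consensus dimensions onto the three problem types."""
    if consensus_on_values and consensus_on_knowledge:
        return "structured problem"
    if consensus_on_values or consensus_on_knowledge:
        return "moderately structured problem"
    return "unstructured problem"


# Example: actors agree on norms and values, but not on the knowledge.
print(classify_problem(consensus_on_values=True, consensus_on_knowledge=False))
```

In practice, the degree of consensus is a matter of interpretation rather than a yes/no value, so such a rule can only support, not replace, the qualitative assessment.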

All these descriptive data produce an overview of the governance context of a certain innovation. Due to their descriptive nature, they have practical as well as analytical use. On the one hand, the GSA data can directly be used by actors in the innovation context to bring other actors together and increase support for the innovation. From an analytical point of view, on the other hand, the GSA data lend themselves for further analysis with different data analysis methods, both qualitative and quantitative.

Steps involved:

- Familiarization with the generic list of questions

- Adaptation of the generic question list to the specific innovation context

- Answering the questions based on pre-existent knowledge and/or additional data generation

Results produced (examples):

The results of this method can take different forms depending on the level of data processing effort one is willing to invest. The basic results are textual answers to the questions. However, as mentioned previously, the basic, textual GSA data lend themselves to further analysis with different methods. The data can also feed into a more extensive stakeholder analysis, certain types of social network analysis (SNA), or discourse analysis. In sum, the results of the GSA have descriptive value in themselves and also constitute a thorough qualitative data basis for further, more sophisticated types of analysis. As the method has, to a large degree, been specifically developed for InnoForESt, the availability of examples is limited.

How results can inform prototype development and assessment in InnoForESt:

Carried out at an early stage, GSA helps to formulate and select alternative innovations. On this basis, a prototype can be developed with a realistic picture of the political/institutional conditions under which the innovation and the prototype may be possible.

Strengths & weaknesses of the method:

Strengths:

- Provides a rich picture of the innovation context

- Enables tailoring an actor's strategy to other actors in the innovation context

- Reveals underlying argumentations and potential conflicts

- Lays basis for constructive, deliberative intervention

- Can be done on the basis of existing knowledge about the situation, also through consulting case experts, although most often some additional digging and collecting of competing viewpoints is advised

Weaknesses:

- Requires intensive familiarization with the innovation context

- Requires basic experience in social science or policy analysis

- Is tailor-made for specific innovation context; i.e. not simply generalizable to other contexts

- Requires a sufficient level of time investment for data sorting and categorization to be successful and useful

Software and materials needed:

In general, the required materials comprise those necessary for interviewing, if interviews are needed at all. This means that recording devices, informed consent forms, and privacy-sensitive arrangements for interview transcription need to be organized. As regards software, it is not crucial to use a software package to sort through and eventually analyze the data. Having said that, software packages dedicated to qualitative data analysis, such as ATLAS.ti, NVivo, or similar, can be an advantage.

Key references:

Contact info:

- Peter Stegmaier: p.stegmaier@utwente.nl

- Ewert Aukes: e.j.aukes@utwente.nl

- Christian Schleyer: christian.schleyer@uibk.ac.at

3.5 Process Net-Maps

Author/s of method factsheet:

Claudia Sattler

Short description of method:

Process Net-Maps are a novel research tool for interview-based participatory mapping in social network analysis (SNA) (cf. Lubungu and Birner 2018, Zampa 2017, Raabe et al. 2010). They are a variant of the original Net-Map method developed by Schiffer (2007). While the original Net-Maps focus on mapping the status quo of actors' interactions in social networks, Process Net-Maps focus on mapping the consecutive steps of a network development process among actors against a timeline, identifying all relevant actors (e.g. idea givers and initiators, resource providers, implementers, or others) and important events (e.g. events organized, agreements made, and contracts negotiated).

Against this backdrop, for InnoForESt, Process Net-Maps are used to gain a deeper understanding of the history of an innovation process, in particular of the actors involved and the sequence of events that were organized regarding the initiation, the planning and design, and the actual practical implementation of the innovation. They are also used to learn about the motivations of the different actors to get involved in the innovation process, their influence and power in the interaction with each other, and the benefits they obtain from their involvement. Furthermore, Process Net-Maps are suitable for identifying when and why challenges occurred in the development process of the innovation and how the involved actors addressed and overcame or mitigated them. Besides this historic and backward-looking analysis, Process Net-Maps can also be used for a forward-looking analysis that speculates about possible future development pathways of the innovation for prototyping, anticipating which additional actors are needed and which future activities and events would be necessary to follow a specific development path.

Steps involved:

The interviews for the Process Net-Maps are divided into two parts (see Figure 7):

- Part A), with a backward-looking analysis, to understand the development process of the innovation up to the present day, and,

- Part B), with a forward-looking analysis to outline possible future developments of the innovation for prototyping.

During the interviews, in parallel to the interview conversation, the innovation process is visualized on a large sheet of paper, the actual Process Net-Map, by writing all relevant actors and events on post-its, which are placed along a timeline.

The interviews follow this interview guideline:

Part A): Questions for backward-looking analysis

Process mapping: Can you tell me about the story of the innovation? When did everything start? How did the innovation develop further step by step? Who were the important actors (e.g. individuals, groups of people, and organizations)? What were the roles of the different actors (e.g. innovator/idea giver, supporter/resource giver, influencer, and implementer)? What were crucial events (e.g. meetings, agreements, contracts, change in policies, and crises/conflicts)? How did these events influence the process (positive or negative)?

In this step, the actors and events are written down on post-its using a color code (e.g. actors on orange post-its, events on yellow post-its) and are arranged along the timeline according to when the actors got involved and when the events took place.

Actors' attributes: What was the motivation of each actor to get involved (e.g. motivated by economic, social, and ecological interests)? How influential are the different actors? Which actors obtain the highest benefits?

In this step, the interviewees are free to define the motivations, which are usually displayed as icons or symbols drawn onto the individual actor post-its (e.g. a tree for ecological, a coin for economic, and a heart for social motivations). To indicate influence and benefits, the interviewees stack towers. A maximum of five stacks per tower is allowed. The higher the tower, the greater the influence or the benefits of the respective actor. Actors with no influence and/or benefits get no stacks on their actor post-it. The interviewees are allowed to add stacks and take them away again until they are satisfied with their towers. Finally, the number of stacks in each tower is also noted on the actor post-it, again using different colors for influence and benefits (e.g. red for influence and green for benefit).

Events' attributes: Which events were positive and pushed the innovation forward? How exactly? Which events were negative and caused difficulties/challenges? How? What was done to overcome the different difficulties/challenges?

In this step, symbols are also used to mark the positive and negative events (e.g. an asterisk for the positive ones and a flash for the negative ones).

Part B): Questions for forward-looking analysis

Process: When you think about the next three (five, ten) years, can you tell me which steps are planned next? Which old/new actors will be important? In which role could InnoForESt support the process as an actor? What events are planned already?

For this step, usually a new sheet is used to start fresh and with lots of space. Old actors get displayed with the same color as in the backward-looking analysis. For new actors a new color (e.g. pink) is used.

Criteria of success and failure: When you think about the future, what criteria would you use to measure success? What criteria would you use to define failures?

For this step, an additional large-format post-it is added to a free corner of the Process Net-Map, on which criteria of success and failure are listed as expressed by the interviewee.

Results produced (examples):

The Process Net-Maps have two types of results: first, the actual physical Process Net-Maps visualizing all important actors and events from the backward- and forward-looking analysis, and, second, the audio recording of the interview. Both are equally important for the analysis. While the physical Process Net-Maps hold mostly quantitative information (e.g. number and kind of actors involved, number and kind of events conducted for innovation development, number and kind of motivations for actors' involvement, and number of stacks in the influence and benefits towers), the audio recordings hold most of the qualitative information that is crucial for interpretation (e.g. why certain actions were pursued or how challenges were mastered). Figure 8 shows an example of a physical Process Net-Map created in one interview in the Swedish innovation region.
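The quantitative information read off a physical Process Net-Map could, for instance, be digitized and tallied as in the following Python sketch. It is a minimal illustration under stated assumptions: the class and field names, the `summarize` helper, and all actors and events are hypothetical and do not reproduce data from an InnoForESt interview. Only the limit of five stacks per tower is taken from the interview guideline.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List

MAX_STACKS = 5  # the interview guideline allows at most five stacks per tower


@dataclass
class Actor:
    name: str
    motivations: List[str] = field(default_factory=list)
    influence: int = 0  # height of the influence tower (0 = no influence)
    benefit: int = 0    # height of the benefits tower (0 = no benefits)

    def __post_init__(self):
        # Reject tower heights outside the range allowed in the interviews
        for label, value in (("influence", self.influence), ("benefit", self.benefit)):
            if not 0 <= value <= MAX_STACKS:
                raise ValueError(f"{label} tower of {self.name!r} must have 0 to {MAX_STACKS} stacks")


@dataclass
class Event:
    label: str
    year: int
    positive: bool  # True: pushed the innovation forward; False: caused difficulties


def summarize(actors, events):
    """Tally the quantitative information held by one Process Net-Map."""
    return {
        "n_actors": len(actors),
        "n_events": len(events),
        "motivations": Counter(m for a in actors for m in a.motivations),
        "positive_events": sum(e.positive for e in events),
        "negative_events": sum(not e.positive for e in events),
        "most_influential": max(actors, key=lambda a: a.influence).name,
    }


# Hypothetical map content for illustration only
actors = [
    Actor("Forest owner", ["economic", "ecological"], influence=4, benefit=3),
    Actor("Municipality", ["social"], influence=2, benefit=1),
    Actor("NGO", ["ecological"], influence=1, benefit=0),
]
events = [
    Event("First workshop", 2015, positive=True),
    Event("Funding cut", 2017, positive=False),
]
summary = summarize(actors, events)
print(summary)
```

Such a tally covers only what is written on the post-its; the reasons behind the numbers still have to come from the interview recordings.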

How results can inform prototype development and assessment in InnoForESt:

The forward-looking analysis (part B) is especially helpful for outlining possible future developments of the innovation and exploring different options for prototyping (up-grading, up-scaling, replication, or hybrids thereof). It can also be used to speculate about a 'best-case' scenario (complete success) and contrast it with a 'worst-case' scenario to anticipate possible obstacles and set-backs and think in advance about how best to deal with them. This way, the innovation teams can create a contingency plan to be prepared in case some things do not go according to plan.

Strengths and weaknesses of the method:

Strengths:

- Yields rich data, including both quantitative and qualitative information

- Elicits implicit knowledge on all important steps in the development process of the innovation

- Helps to identify particular challenges in the innovation process and how they were dealt with

- Communicated information during the interviews gets visualized in the physical Process Net-Maps

- Allows for a high level of participation (co-creation of Process Net-Maps during the interview)

Weaknesses:

- Time- and resource-consuming (interviews can take between two and four hours; transcribing interview recordings and content analysis of transcripts are very time-consuming, too)

- Ideally, the interviewee has knowledge about the whole or at least most of the development process of the innovation (initiation, design, and implementation), which limits the number of possible interviewees

- Results are highly perception-based (this is not a disadvantage per se, but needs to be made transparent)

Software and materials needed:

For conducting the Process Net-Map interviews, the following materials are needed: large sheets of paper, post-its in different colors and formats, color pens, material to build towers (e.g. small wooden bricks, stackable sweets), recording device, camera (to take a picture of the physical Process Net-Maps in case some post-its do not stay in place when transported), batteries, interview guidelines, and participant consent forms.

For transcribing the interviews, the software f4/f5 can be used. For content analysis of the transcripts, software such as MAXQDA, ATLAS.ti, or NVivo is helpful.

Key references:

Contact info:

- Claudia Sattler: csattler@zalf.de

- Claas Meyer: claas.meyer@zalf.de

3.6 Qualitative comparative analysis

Author/s of method factsheet:

Claas Meyer

Short description of method:

Qualitative Comparative Analysis (QCA) is seen as a middle way that combines certain features of qualitative research with features of quantitative research. QCA aims to find causal relationships between cases' properties (conditions) and an outcome, such as success/non-success. QCA does not follow a statistical logic but employs set theory, Boolean logic (Yes/No, True/False), or fuzzy algebra (degrees of membership in Yes/No or True/False within a range between 0 and 1). The method focuses on understanding the relations between different causes and their interconnections. The basic idea of QCA is to apply a systematic cross-case comparison to intermediate sample sizes (between 5 and 100 cases) that are too small for statistical analysis, while keeping a relation to the single case. The central principle is 'multiple conjunctural causation', which means that not only a single variable but combinations of variables can lead to an outcome, that different combinations of variables can produce the same outcome, and that one condition can have different impacts on the outcome, depending on its combination with other factors. QCA allows for a determination of necessary and sufficient conditions for the outcome. A condition can be interpreted as necessary if, whenever the outcome is present, the condition is also present. Conversely, a condition can be interpreted as sufficient if, whenever the condition is present, the outcome is also present (see in particular Sehring et al. 2013; Schneider and Wagemann 2012; Rihoux 2003).
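The definitions of necessity and sufficiency can be illustrated for the crisp-set (Boolean) variant with a few lines of Python. This is a minimal sketch, not a full QCA: it skips truth-table minimization and consistency thresholds, the function names are hypothetical, and the five cases with conditions `A` and `B` are invented for illustration and are not taken from any study cited here.

```python
def is_necessary(cases, condition, outcome="outcome"):
    """Necessary: in every case where the outcome is present, the condition is present.

    Note: returns True vacuously if no case shows the outcome.
    """
    return all(case[condition] for case in cases if case[outcome])


def is_sufficient(cases, conditions, outcome="outcome"):
    """Sufficient: in every case where all listed conditions are present, the outcome is present."""
    matching = [case for case in cases if all(case[name] for name in conditions)]
    # Without at least one matching case there is no evidence for sufficiency
    return bool(matching) and all(case[outcome] for case in matching)


# Hypothetical crisp-set data: two conditions (A, B) and one outcome per case
cases = [
    {"A": True,  "B": True,  "outcome": True},
    {"A": True,  "B": False, "outcome": False},
    {"A": False, "B": True,  "outcome": False},
    {"A": True,  "B": True,  "outcome": True},
    {"A": False, "B": False, "outcome": False},
]

print("A necessary:       ", is_necessary(cases, "A"))
print("A sufficient:      ", is_sufficient(cases, ["A"]))
print("A and B sufficient:", is_sufficient(cases, ["A", "B"]))
```

In this invented data set, neither condition is sufficient on its own, but their combination is, which is the kind of pattern that 'multiple conjunctural causation' refers to.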

Application example: The Kindergarten case

Source: adapted from Berg-Schlosser and Cronqvist (2012: 138 et seqq.)